MRI AI Model Classifies Common Intracranial Tumors

By HospiMedica International staff writers
Posted on 07 Sep 2021

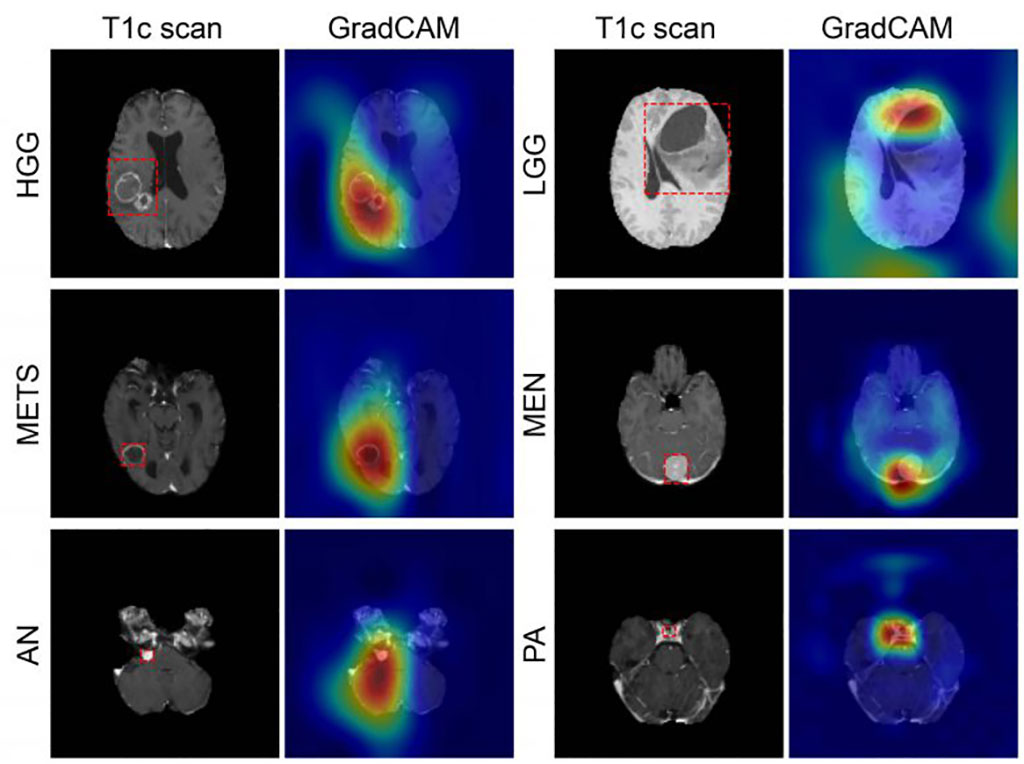

Image: GradCAM color maps showing tumor prediction (Photo courtesy of WUSTL)

An artificial intelligence (AI) 3D model is capable of classifying a brain tumor as one of six common types from a single magnetic resonance imaging (MRI) scan, claims a new study.

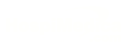

To develop the GradCAM algorithm, researchers at Washington University (WUSTL; St. Louis, MO, USA) used 2,105 T1-weighted MRI scans from four publicly available datasets, split into training (1,396), internal test (361), and external test (348) datasets. A convolutional neural network (CNN) was trained to discriminate between healthy scans and those with tumors, with tumors classified by type (high-grade glioma, low-grade glioma, brain metastases, meningioma, pituitary adenoma, and acoustic neuroma). Performance of the model was then evaluated, with feature maps plotted to visualize network attention.
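As a quick check on the split described above, the three subset sizes can be summed and expressed as shares of the full collection. The snippet below is only an arithmetic sketch. The variable names are illustrative, and nothing here reflects how the researchers actually partitioned the scans.

```python
# Subset sizes as reported in the article (scan counts).
split = {"training": 1396, "internal test": 361, "external test": 348}

# The three subsets should account for all 2,105 scans.
total = sum(split.values())

# Share of the full collection held by each subset.
shares = {name: count / total for name, count in split.items()}

for name, share in shares.items():
    print(f"{name}: {split[name]} scans ({share:.1%})")
print(f"total: {total} scans")
```

By this arithmetic, roughly two thirds of the scans were used for training, with the remainder divided almost evenly between the internal and external test sets.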

The internal test results showed GradCAM achieved an accuracy of 93.35% across seven imaging classes (a healthy class and six tumor classes). Sensitivities ranged from 91% to 100%, and positive predictive value (PPV) ranged from 85% to 100%. Negative predictive value (NPV) ranged from 98% to 100% across all classes. Network attention overlapped with the tumor areas for all tumor types. For the external test dataset, which included only two tumor types (high-grade glioma and low-grade glioma), GradCAM had an accuracy of 91.95%. The study was published on August 11, 2021, in Radiology: Artificial Intelligence.
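For readers unfamiliar with the per-class figures quoted above, the following sketch shows how sensitivity, PPV, and NPV are commonly derived from a multiclass confusion matrix by treating each class in turn as "positive" and all other classes as "negative". The three-class matrix is invented purely for illustration and is not data from the study. The function names `per_class_metrics` and `accuracy` are likewise hypothetical.

```python
from typing import Dict, List


def _ratio(num: int, den: int) -> float:
    # Return NaN instead of raising when a class has no relevant cases.
    return num / den if den else float("nan")


def per_class_metrics(cm: List[List[int]], labels: List[str]) -> Dict[str, Dict[str, float]]:
    """One-vs-rest metrics; cm[i][j] = scans of true class i predicted as class j."""
    total = sum(sum(row) for row in cm)
    out = {}
    for k, name in enumerate(labels):
        tp = cm[k][k]                          # class k predicted as k
        fn = sum(cm[k]) - tp                   # class k predicted as something else
        fp = sum(row[k] for row in cm) - tp    # other classes predicted as k
        tn = total - tp - fn - fp              # everything else
        out[name] = {
            "sensitivity": _ratio(tp, tp + fn),
            "ppv": _ratio(tp, tp + fp),
            "npv": _ratio(tn, tn + fn),
        }
    return out


def accuracy(cm: List[List[int]]) -> float:
    """Overall accuracy: correctly classified scans over all scans."""
    correct = sum(cm[i][i] for i in range(len(cm)))
    return _ratio(correct, sum(sum(row) for row in cm))


# Made-up example matrix (NOT study data): rows are true classes, columns predictions.
labels = ["healthy", "glioma", "meningioma"]
cm = [
    [48, 1, 1],
    [2, 45, 3],
    [0, 4, 46],
]

metrics = per_class_metrics(cm, labels)
for name in labels:
    print(name, {k: round(v, 3) for k, v in metrics[name].items()})
print("accuracy:", round(accuracy(cm), 4))
```

Because each class is scored against all the others combined, NPV tends to be high in multiclass settings: most scans are true negatives for any single class.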

“These results suggest that deep learning is a promising approach for automated classification and evaluation of brain tumors. The model achieved high accuracy on a heterogeneous dataset and showed excellent generalization capabilities on unseen testing data,” said lead author Satrajit Chakrabarty, MSc, of the department of electrical and systems engineering. “This network is the first step toward developing an artificial intelligence-augmented radiology workflow that can support image interpretation by providing quantitative information and statistics.”

Deep learning is part of a broader family of machine learning methods in AI that are based on learning data representations, as opposed to task-specific algorithms. It involves CNN algorithms that use a cascade of many layers of nonlinear processing units for feature extraction, conversion, and transformation, with each successive layer using the output from the previous layer as input to form a hierarchical representation.
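The idea of a cascade of nonlinear layers can be illustrated with a toy forward pass in which each layer consumes the previous layer's output. This is a minimal sketch, not the network from the study: small fully connected layers stand in for convolutional ones for brevity, and the weights, the input, and the helper names `dense`, `relu`, and `forward` are all made up for the example.

```python
from typing import List, Tuple

Vector = List[float]
Layer = Tuple[List[Vector], Vector]  # (weight rows, biases)


def dense(weights: List[Vector], bias: Vector, x: Vector) -> Vector:
    # Linear step: one weighted sum plus bias per output unit.
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(v: Vector) -> Vector:
    # Nonlinear step: negative values are clipped to zero.
    return [max(0.0, x) for x in v]


def forward(layers: List[Layer], x: Vector) -> List[Vector]:
    """Run x through every layer and return the activations at each stage."""
    activations = [x]
    for weights, bias in layers:
        x = relu(dense(weights, bias, x))  # output of one layer feeds the next
        activations.append(x)
    return activations


# Illustrative weights only: a 2-unit layer followed by a 1-unit layer.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, -1.0]),
    ([[2.0, 1.0]], [0.5]),
]

stages = forward(layers, [3.0, 1.0])
for depth, values in enumerate(stages):
    print(f"stage {depth}: {values}")
```

Each entry in `stages` is a progressively more processed representation of the input, which is the sense in which deep networks are said to build a hierarchy of features.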

Related Links:

Washington University

