New Artificial Intelligence Method Helps Design Better COVID-19 Antibody Drugs

By HospiMedica International staff writers. Posted on 20 Apr 2021

Machine learning methods can help optimize the development of COVID-19 antibody drugs, yielding active substances with improved properties, including better tolerability in the body, according to researchers.

Scientists at ETH Zürich (Zürich, Switzerland) have developed a machine learning method that supports the optimization phase, helping to develop more effective antibody drugs. Antibodies are not only produced by our immune cells to fight viruses and other pathogens in the body. For a few decades now, medicine has also been using antibodies produced by biotechnology as drugs. This is because antibodies are extremely good at binding specifically to molecular structures according to the lock-and-key principle. Their use ranges from oncology to the treatment of autoimmune diseases and neurodegenerative conditions.

However, developing such antibody drugs is anything but simple. The basic requirement is for an antibody to bind to its target molecule in an optimal way. At the same time, an antibody drug must fulfill a host of additional criteria. For example, it should not trigger an immune response in the body, it should be efficient to produce using biotechnology, and it should remain stable over a long period of time. Once scientists have found an antibody that binds to the desired molecular target structure, the development process is far from over. Rather, this marks the start of a phase in which researchers use bioengineering to try to improve the antibody’s properties.

When researchers optimize an entire antibody molecule in its therapeutic form (i.e., not just a fragment of an antibody), the process used to start with an antibody lead candidate that binds reasonably well to the desired target structure. Researchers would then randomly mutate the gene that carries the blueprint for the antibody to produce a few thousand related antibody candidates in the lab, and search among them for the ones that bind best to the target structure. The ETH researchers are now using machine learning to increase the initial set of antibodies to be tested to several million.

The researchers provided the proof of concept for their new method using Roche’s antibody cancer drug Herceptin, which has been on the market for 20 years. Starting out from the DNA sequence of the Herceptin antibody, the ETH researchers created about 40,000 related antibodies using a CRISPR mutation method they developed a few years ago. Experiments showed that 10,000 of them bound well to the target protein in question, a specific cell surface protein. The scientists used the DNA sequences of these 40,000 antibodies to train a machine learning algorithm. They then applied the trained algorithm to search a database of 70 million potential antibody DNA sequences. For these 70 million candidates, the algorithm predicted how well the corresponding antibodies would bind to the target protein, resulting in a list of millions of sequences expected to bind.
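The train-then-screen step described above can be illustrated with a small sketch. The article does not say which machine learning model the ETH researchers used, so the code below swaps in a simple naive Bayes-style scorer (per-position log-odds of each residue in binders versus non-binders) purely as a stand-in. The function names, the three-letter toy sequences, and the zero threshold are all hypothetical, and the example works on short amino acid strings rather than the DNA sequences used in the study.

```python
import math
from collections import Counter
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def train_log_odds(sequences, labels, pseudocount=1.0):
    """Fit per-position log-odds of each residue in binders vs. non-binders.

    Stand-in model only; the article does not specify the actual algorithm.
    """
    length = len(sequences[0])
    binders = [Counter() for _ in range(length)]
    others = [Counter() for _ in range(length)]
    n_bind = sum(labels)
    n_other = len(labels) - n_bind
    for seq, is_binder in zip(sequences, labels):
        table = binders if is_binder else others
        for pos, aa in enumerate(seq):
            table[pos][aa] += 1
    k = len(AMINO_ACIDS)
    model = []
    for pos in range(length):
        scores = {}
        for aa in AMINO_ACIDS:
            # Pseudocounts keep unseen residues from producing log(0).
            p_bind = (binders[pos][aa] + pseudocount) / (n_bind + k * pseudocount)
            p_other = (others[pos][aa] + pseudocount) / (n_other + k * pseudocount)
            scores[aa] = math.log(p_bind / p_other)
        model.append(scores)
    return model

def score(model, seq):
    """Sum the per-position log-odds; positive means 'looks like a binder'."""
    return sum(model[pos][aa] for pos, aa in enumerate(seq))

def predict_binders(model, candidates, threshold=0.0):
    """Keep the candidates whose score exceeds the (hypothetical) threshold."""
    return [seq for seq in candidates if score(model, seq) > threshold]

# Toy stand-in for the experimentally labeled variants (1 = bound well).
train_seqs = ["WGA", "WGS", "WAA", "KGA", "KDS", "KDA"]
train_labels = [1, 1, 1, 0, 0, 0]
model = train_log_odds(train_seqs, train_labels)

# Toy stand-in for the in-silico library: every combination of a few
# residues per position, none of which needs to be made in the lab first.
library = ["".join(p) for p in product("WK", "GD", "AS")]
predicted = predict_binders(model, library)
print(predicted)
```

The point of the sketch is the workflow rather than the model: a modest set of lab-measured variants is used to score a far larger set of sequences that were never synthesized.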

Using further computer models, the scientists predicted how well these millions of sequences would meet the additional criteria for drug development (tolerance, production, physical properties). This reduced the number of candidate sequences to 8,000. From the list of optimized candidate sequences on their computer, the scientists selected 55 sequences from which to produce antibodies in the lab and characterize their properties. Subsequent experiments showed that several of them bound even better to the target protein than Herceptin itself, as well as being easier to produce and more stable than Herceptin. The ETH scientists are now applying their AI method to optimize antibody drugs that are in clinical development.
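The narrowing described above, from predicted binders to a short list for the lab, amounts to a filter-then-select funnel. The sketch below is one way to picture it under stated assumptions: the property names, score ranges, and cutoffs are hypothetical, and ranking the survivors by predicted binding is an illustrative choice, since the article does not say how the 55 sequences were picked.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Candidate:
    sequence: str
    binding: float         # predicted binding score, higher is better
    immunogenicity: float  # predicted immune-response risk, lower is better
    expression: float      # predicted ease of production, higher is better
    stability: float       # predicted stability, higher is better

def passes_filters(c, max_immunogenicity=0.3, min_expression=0.5, min_stability=0.5):
    """Apply the additional drug-development criteria (hypothetical cutoffs)."""
    return (
        c.immunogenicity <= max_immunogenicity
        and c.expression >= min_expression
        and c.stability >= min_stability
    )

def select_for_lab(candidates, n):
    """Filter on the extra criteria, then keep the n best predicted binders."""
    shortlisted = [c for c in candidates if passes_filters(c)]
    ranked = sorted(shortlisted, key=lambda c: c.binding, reverse=True)
    return ranked[:n]

# Made-up predictions for five placeholder sequences.
candidates = [
    Candidate("SEQ_A", binding=0.95, immunogenicity=0.10, expression=0.80, stability=0.70),
    Candidate("SEQ_B", binding=0.99, immunogenicity=0.60, expression=0.90, stability=0.90),
    Candidate("SEQ_C", binding=0.80, immunogenicity=0.20, expression=0.60, stability=0.90),
    Candidate("SEQ_D", binding=0.85, immunogenicity=0.25, expression=0.40, stability=0.80),
    Candidate("SEQ_E", binding=0.90, immunogenicity=0.05, expression=0.70, stability=0.55),
]

picked = select_for_lab(candidates, n=2)
print([c.sequence for c in picked])
```

In this toy run the strongest predicted binder, SEQ_B, is dropped because it fails the immunogenicity cutoff, which mirrors the idea that binding alone does not make a good drug candidate.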

“With automated processes, you can test a few thousand therapeutic candidates in a lab. But it is not really feasible to screen any more than that,” said Sai Reddy, a professor at the Department of Biosystems Science and Engineering at ETH Zürich who led the study. “Typically, the best dozen antibodies from this screening move on to the next step and are tested for how well they meet additional criteria. Ultimately, this approach lets you identify the best antibody from a group of a few thousand.”

Related Links:

ETH Zürich

Channels

Artificial Intelligence

view channelAI Analysis of Pericardial Fat Refines Long-Term Heart Disease Risk

Accurately identifying long-term cardiovascular disease risk in asymptomatic adults remains challenging for clinicians. Missed or underestimated risk delays preventive therapy and increases the chance... Read more

Machine Learning Approach Enhances Liver Cancer Risk Stratification

Hepatocellular carcinoma, the most common form of primary liver cancer, is often detected late despite targeted surveillance programs. Current screening guidelines emphasize patients with known cirrhosis,... Read moreCritical Care

view channel

Angiography-Based FFR Approach Matches Gold Standard Results Without Wires

Accurately determining whether a coronary stenosis limits blood flow is essential to guide percutaneous coronary intervention, yet wire-based physiologic testing remains underused due to added procedural... Read more

Eye Imaging AI Identifies Elevated Cardiovascular Risk

Many adults at risk for atherosclerotic cardiovascular disease are not identified until they undergo formal primary care assessment. Delayed risk recognition can postpone initiation of statins and lifestyle... Read moreSurgical Techniques

view channel

Fiber-Form Bone Graft Expands Intraoperative Options for Spinal Fusion

Spinal and orthopedic fusion procedures often require bone graft materials that handle predictably and support bone formation. Surgeons face added complexity in difficult anatomy and challenging fusion environments.... Read more

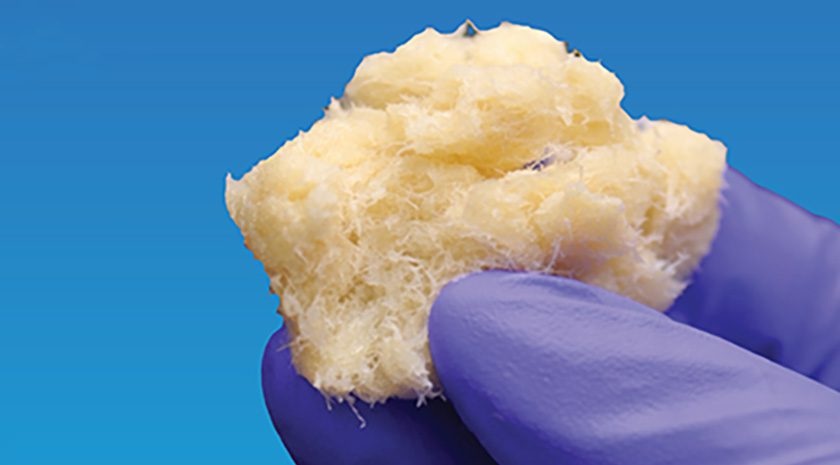

Ultrasound‑Aided Catheter Treatment Cuts Early Collapse in Pulmonary Embolism

Acute pulmonary embolism can cause rapid hemodynamic deterioration and early death in hospitalized and emergency patients. Systemic thrombolysis can dissolve clots but is limited by a high risk of major... Read morePatient Care

view channel

Wearable Sleep Data Predict Adherence to Pulmonary Rehabilitation

Chronic obstructive pulmonary disease (COPD) is a long-term lung disorder that makes breathing difficult and often disturbs sleep, reducing energy for daily activities. Limited engagement in pulmonary... Read more

Revolutionary Automatic IV-Line Flushing Device to Enhance Infusion Care

More than 80% of in-hospital patients receive intravenous (IV) therapy. Every dose of IV medicine delivered in a small volume (<250 mL) infusion bag should be followed by subsequent flushing to ensure... Read moreHealth IT

view channel

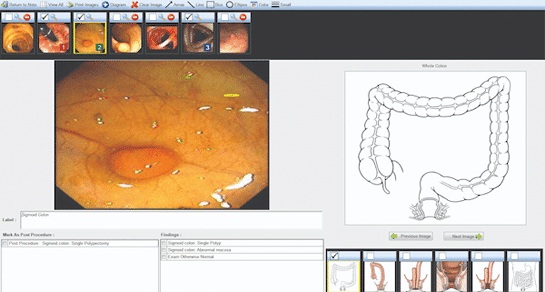

Voice-Driven AI System Enables Structured GI Procedure Documentation

Documentation during gastrointestinal (GI) procedures often competes with real-time clinical decision-making and imposes a significant cognitive burden on physicians. Manual data entry and post-procedure... Read more

EMR-Based Tool Predicts Graft Failure After Kidney Transplant

Kidney transplantation offers patients with end-stage kidney disease longer survival and better quality of life than dialysis, yet graft failure remains a major challenge. Although a successful transplant... Read more

Printable Molecule-Selective Nanoparticles Enable Mass Production of Wearable Biosensors

The future of medicine is likely to focus on the personalization of healthcare—understanding exactly what an individual requires and delivering the appropriate combination of nutrients, metabolites, and... Read moreBusiness

view channel