MRI AI Model Classifies Common Intracranial Tumors

By HospiMedica International staff writers. Posted on 07 Sep 2021.

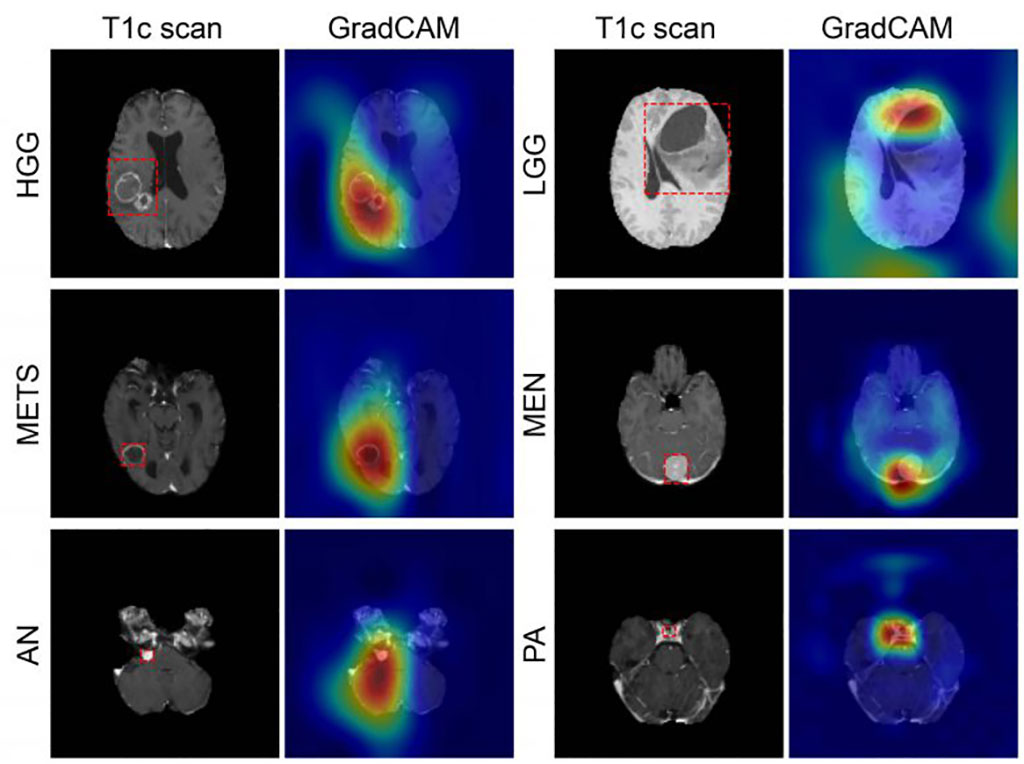

Image: Grad-CAM color maps showing tumor prediction (Photo courtesy of WUSTL)

A three-dimensional (3D) artificial intelligence (AI) model can classify a brain tumor as one of six common types from a single magnetic resonance imaging (MRI) scan, according to a new study.

To develop the model, researchers at Washington University (WUSTL; St. Louis, MO, USA) used 2,105 T1-weighted MRI scans from four publicly available datasets, split into a training set (1,396 scans), an internal test set (361 scans), and an external test set (348 scans). They trained a convolutional neural network (CNN) to distinguish healthy scans from scans containing one of six tumor types: high-grade glioma, low-grade glioma, brain metastases, meningioma, pituitary adenoma, and acoustic neuroma. They then evaluated the model's performance and used Grad-CAM (gradient-weighted class activation mapping) feature maps to visualize where the network focused its attention.
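The attention maps mentioned above are Grad-CAM maps, a general technique for highlighting the image regions that drive a CNN's prediction for a given class. The sketch below shows only its core computation, under simplifying assumptions: the last convolutional layer's feature maps and the gradients of the class score with respect to them are already available (a deep learning framework would normally supply both), and each 3D map is flattened into a plain list of voxel values. The function name and toy numbers are hypothetical and are not taken from the study.

```python
from typing import List


def grad_cam(feature_maps: List[List[float]], gradients: List[List[float]]) -> List[float]:
    """Compute a Grad-CAM heat map for one class from one convolutional layer.

    feature_maps[k][i]: activation of channel k at voxel i (3D maps flattened).
    gradients[k][i]: d(class score) / d(feature_maps[k][i]).
    Returns one non-negative value per voxel, scaled to [0, 1].
    """
    if len(feature_maps) != len(gradients):
        raise ValueError("need one gradient map per feature map")
    cam = [0.0] * len(feature_maps[0])
    for fmap, grad in zip(feature_maps, gradients):
        # Channel weight: the gradient averaged over all voxels (global average pooling).
        alpha = sum(grad) / len(grad)
        # Accumulate the weighted sum of feature maps, voxel by voxel.
        for i, activation in enumerate(fmap):
            cam[i] += alpha * activation
    # ReLU keeps only regions that push the class score up.
    cam = [max(0.0, value) for value in cam]
    # Scale to [0, 1] for display as a color map; an all-zero map is returned unchanged.
    # (A real pipeline would also upsample the map to the MRI resolution; omitted here.)
    peak = max(cam)
    return [value / peak for value in cam] if peak > 0 else cam


# Toy example with hypothetical values: two channels, four voxels.
features = [[1.0, 2.0, 0.0, 0.5],
            [0.0, 1.0, 3.0, 0.0]]
grads = [[0.2, 0.2, 0.2, 0.2],
         [-0.1, -0.1, -0.1, -0.1]]
print(grad_cam(features, grads))
```

In this toy case the second voxel receives the highest weight, while the third voxel is zeroed out because it is driven only by a channel whose average gradient is negative.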

On the internal test set, the model achieved an accuracy of 93.35% across seven imaging classes (one healthy class and six tumor classes). Per-class sensitivity ranged from 91% to 100%, positive predictive value (PPV) from 85% to 100%, and negative predictive value (NPV) from 98% to 100%. Network attention overlapped with the tumor regions for all tumor types. On the external test set, which included only two tumor types (high-grade glioma and low-grade glioma), the model achieved an accuracy of 91.95%. The study was published on August 11, 2021, in Radiology: Artificial Intelligence.
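To make the reported figures concrete, the sketch below shows how overall accuracy and per-class sensitivity, PPV, and NPV are commonly derived from a multiclass confusion matrix by treating each class one-vs-rest. The study's evaluation code is not described in this article, so these are the standard textbook definitions rather than the authors' implementation; the 3×3 matrix is invented toy data, not the study's results, and a seven-class matrix works the same way.

```python
from typing import Dict, List


def _ratio(numerator: int, denominator: int) -> float:
    # Return NaN instead of raising when a class never occurs or is never predicted.
    return numerator / denominator if denominator else float("nan")


def confusion_metrics(cm: List[List[int]]) -> Dict[str, object]:
    """Overall accuracy plus one-vs-rest sensitivity, PPV and NPV for each class.

    cm[t][p] counts scans whose true class is t and whose predicted class is p.
    """
    n = len(cm)
    total = sum(sum(row) for row in cm)
    correct = sum(cm[i][i] for i in range(n))
    per_class = []
    for c in range(n):
        tp = cm[c][c]
        fn = sum(cm[c]) - tp                       # truly class c, predicted as another class
        fp = sum(cm[t][c] for t in range(n)) - tp  # predicted as c, truly another class
        tn = total - tp - fn - fp                  # neither truly c nor predicted as c
        per_class.append({
            "sensitivity": _ratio(tp, tp + fn),
            "ppv": _ratio(tp, tp + fp),
            "npv": _ratio(tn, tn + fn),
        })
    return {"accuracy": _ratio(correct, total), "per_class": per_class}


# Hypothetical 3-class confusion matrix (rows = true class, columns = predicted class).
toy_cm = [
    [50, 2, 0],
    [3, 40, 5],
    [0, 4, 46],
]
metrics = confusion_metrics(toy_cm)
print(f"accuracy = {metrics['accuracy']:.3f}")
for label, m in zip(["class A", "class B", "class C"], metrics["per_class"]):
    print(label, {k: round(v, 3) for k, v in m.items()})
```

Because every scan that is neither truly in class c nor predicted as c counts as a true negative for c, the true-negative count grows with the number of classes. That is one reason NPV values tend to sit higher than PPV values in one-vs-rest reporting like the ranges above.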

“These results suggest that deep learning is a promising approach for automated classification and evaluation of brain tumors. The model achieved high accuracy on a heterogeneous dataset and showed excellent generalization capabilities on unseen testing data,” said lead author Satrajit Chakrabarty, MSc, of the department of electrical and systems engineering. “This network is the first step toward developing an artificial intelligence-augmented radiology workflow that can support image interpretation by providing quantitative information and statistics.”

Deep learning is part of a broader family of machine learning methods based on learning data representations, as opposed to task-specific algorithms. Deep learning models such as CNNs use a cascade of many layers of nonlinear processing units for feature extraction and transformation, with each successive layer taking the output of the previous layer as its input to form a hierarchical representation.
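The layered cascade described above can be sketched as a forward pass in which each layer's output becomes the next layer's input. This is a minimal illustration using fully connected layers, ReLU nonlinearities, and hand-picked hypothetical weights; a CNN such as the one in the study would replace the dense products with (3D) convolutions and would learn its weights during training.

```python
from typing import List, Sequence, Tuple

Layer = Tuple[List[List[float]], List[float]]  # (weight matrix, bias vector)


def relu(xs: Sequence[float]) -> List[float]:
    # Nonlinear processing unit: pass positive values, zero out negative ones.
    return [max(0.0, x) for x in xs]


def dense(layer: Layer, inputs: Sequence[float]) -> List[float]:
    weights, biases = layer
    # One output unit per weight row: dot product with the inputs plus a bias.
    return [sum(w * x for w, x in zip(row, inputs)) + b for row, b in zip(weights, biases)]


def forward(layers: Sequence[Layer], inputs: Sequence[float]) -> List[float]:
    activations = list(inputs)
    for depth, layer in enumerate(layers):
        # Each layer consumes the previous layer's output.
        activations = dense(layer, activations)
        if depth < len(layers) - 1:
            activations = relu(activations)  # nonlinearity between layers
    return activations  # raw scores from the final layer


# Hypothetical two-layer network: 2 inputs -> 2 hidden units -> 1 output score.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),
    ([[1.0, 1.0]], [-0.5]),
]
print(forward(layers, [2.0, 1.0]))  # prints [2.0]
```

The final layer is left linear so that it produces raw class scores; a classifier would typically pass these through a softmax to obtain class probabilities.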

Related Links:

Washington University

Latest AI News

- AI-Powered Algorithm to Revolutionize Detection of Atrial Fibrillation

- AI Diagnostic Tool Accurately Detects Valvular Disorders Often Missed by Doctors

- New Model Predicts 10 Year Breast Cancer Risk

- AI Tool Accurately Predicts Cancer Three Years Prior to Diagnosis

- Ground-Breaking Tool Predicts 10-Year Risk of Esophageal Cancer

- AI Tool Analyzes Capsule Endoscopy Videos for Accurately Predicting Patient Outcomes for Crohn’s Disease

Channels

Artificial Intelligence

view channel

AI-Powered Algorithm to Revolutionize Detection of Atrial Fibrillation

Atrial fibrillation (AFib), a condition characterized by an irregular and often rapid heart rate, is linked to increased risks of stroke and heart failure. This is because the irregular heartbeat in AFib... Read more

AI Diagnostic Tool Accurately Detects Valvular Disorders Often Missed by Doctors

Doctors generally use stethoscopes to listen for the characteristic lub-dub sounds made by heart valves opening and closing. They also listen for less prominent sounds that indicate problems with these valves.... Read moreCritical Care

view channel

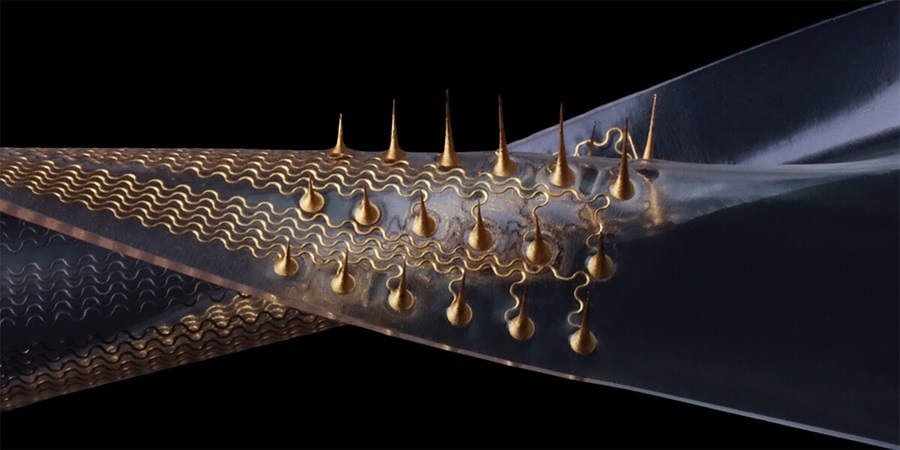

Stretchable Microneedles to Help In Accurate Tracking of Abnormalities and Identifying Rapid Treatment

The field of personalized medicine is transforming rapidly, with advancements like wearable devices and home testing kits making it increasingly easy to monitor a wide range of health metrics, from heart... Read more

Machine Learning Tool Identifies Rare, Undiagnosed Immune Disorders from Patient EHRs

Patients suffering from rare diseases often endure extensive delays in receiving accurate diagnoses and treatments, which can lead to unnecessary tests, worsening health, psychological strain, and significant... Read more

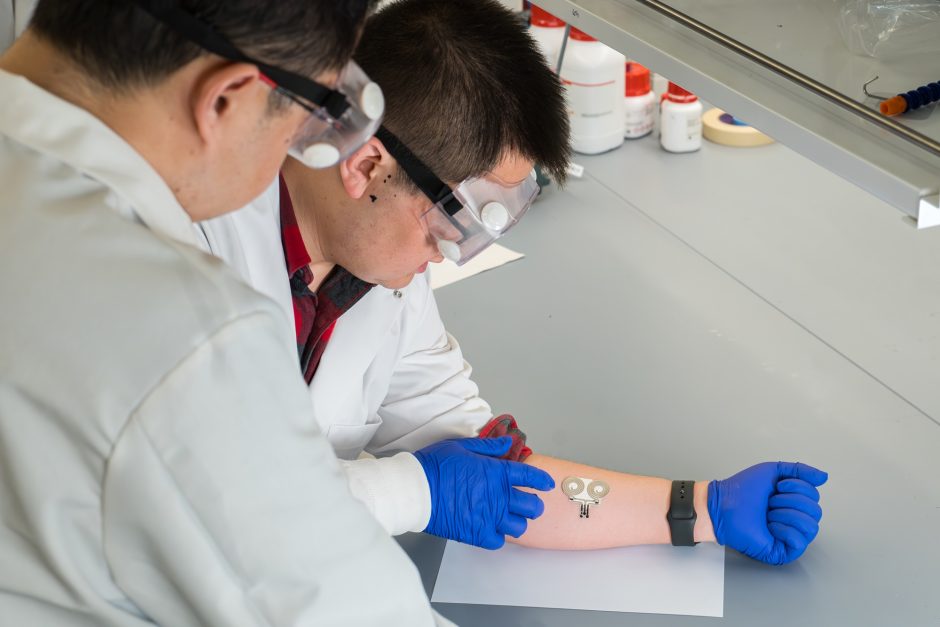

On-Skin Wearable Bioelectronic Device Paves Way for Intelligent Implants

A team of researchers at the University of Missouri (Columbia, MO, USA) has achieved a milestone in developing a state-of-the-art on-skin wearable bioelectronic device. This development comes from a lab... Read more

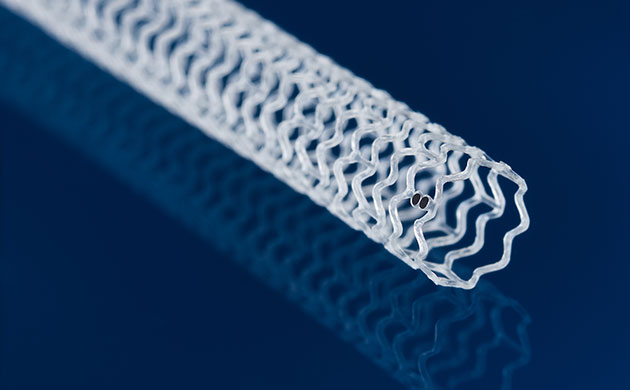

First-Of-Its-Kind Dissolvable Stent to Improve Outcomes for Patients with Severe PAD

Peripheral artery disease (PAD) affects millions and presents serious health risks, particularly its severe form, chronic limb-threatening ischemia (CLTI). CLTI develops when arteries are blocked by plaque,... Read moreSurgical Techniques

view channel

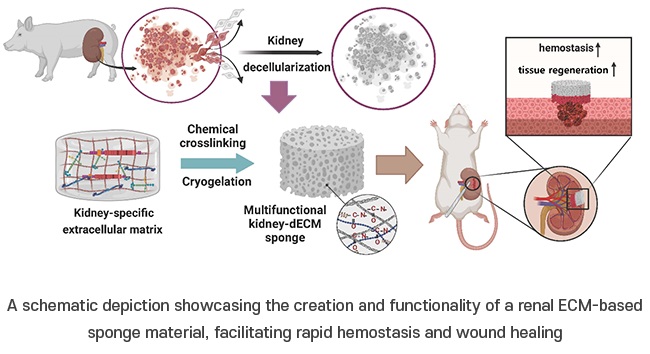

Porous Gel Sponge Facilitates Rapid Hemostasis and Wound Healing

The kidneys are essential organs that handle critical bodily functions, including waste elimination and blood pressure regulation. Often referred to as the silent organ because they typically do not manifest... Read more

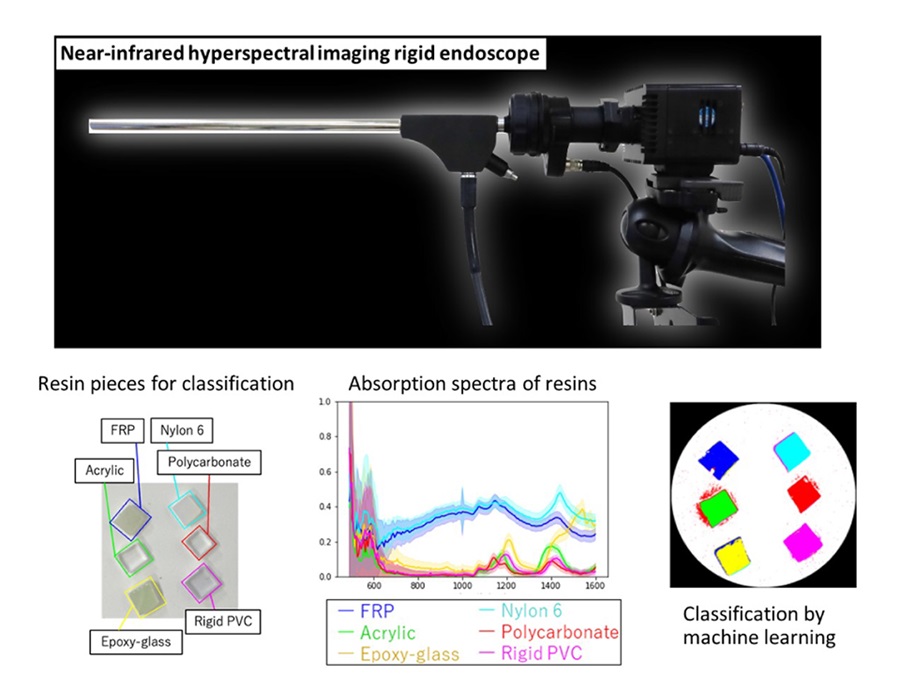

Novel Rigid Endoscope System Enables Deep Tissue Imaging During Surgery

Hyperspectral imaging (HSI) is an advanced technique that captures and processes information across a given electromagnetic spectrum. Near-infrared hyperspectral imaging (NIR-HSI) has particularly gained... Read more

Robotic Nerve ‘Cuffs’ Could Treat Various Neurological Conditions

Electric nerve implants serve dual functions: they can either stimulate or block signals in specific nerves. For example, they may alleviate pain by inhibiting pain signals or restore movement in paralyzed... Read more

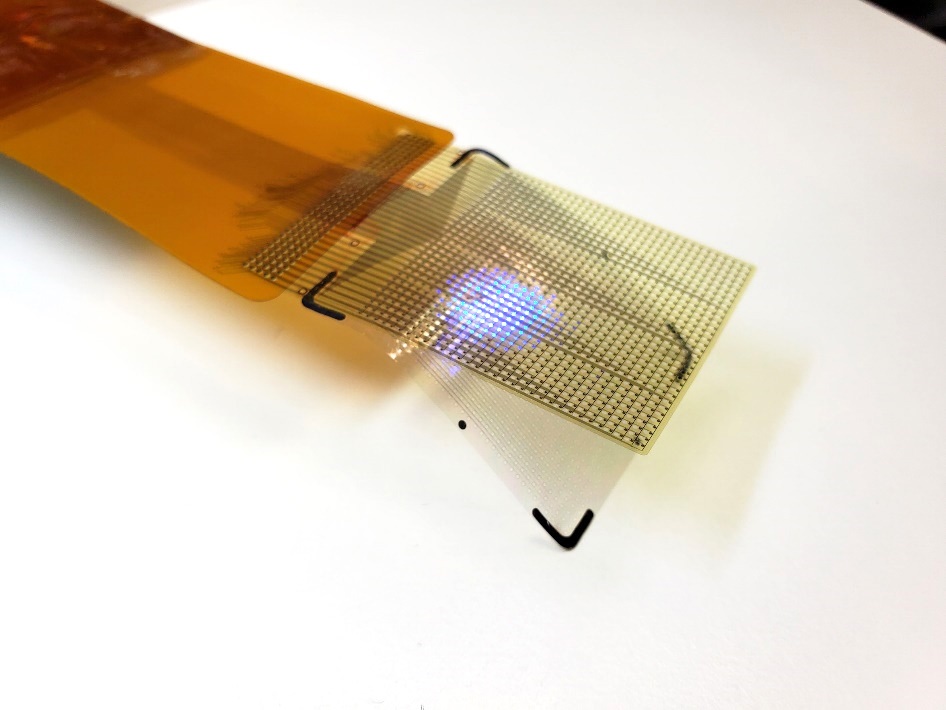

Flexible Microdisplay Visualizes Brain Activity in Real-Time To Guide Neurosurgeons

During brain surgery, neurosurgeons need to identify and preserve regions responsible for critical functions while removing harmful tissue. Traditionally, neurosurgeons rely on a team of electrophysiologists,... Read morePatient Care

view channelFirst-Of-Its-Kind Portable Germicidal Light Technology Disinfects High-Touch Clinical Surfaces in Seconds

Reducing healthcare-acquired infections (HAIs) remains a pressing issue within global healthcare systems. In the United States alone, 1.7 million patients contract HAIs annually, leading to approximately... Read more

Surgical Capacity Optimization Solution Helps Hospitals Boost OR Utilization

An innovative solution has the capability to transform surgical capacity utilization by targeting the root cause of surgical block time inefficiencies. Fujitsu Limited’s (Tokyo, Japan) Surgical Capacity... Read more

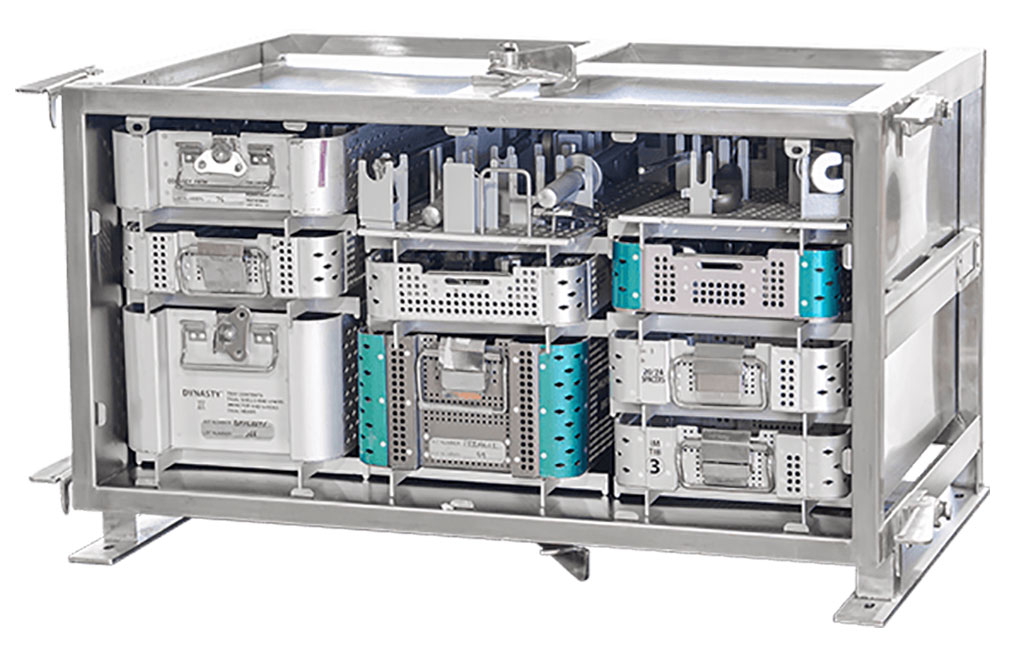

Game-Changing Innovation in Surgical Instrument Sterilization Significantly Improves OR Throughput

A groundbreaking innovation enables hospitals to significantly improve instrument processing time and throughput in operating rooms (ORs) and sterile processing departments. Turbett Surgical, Inc.... Read moreHealth IT

view channel

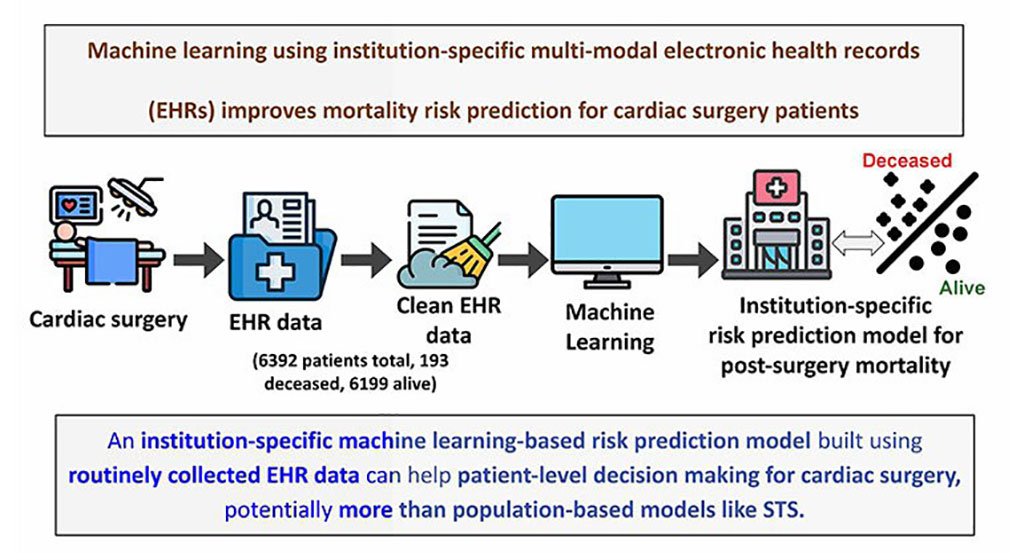

Machine Learning Model Improves Mortality Risk Prediction for Cardiac Surgery Patients

Machine learning algorithms have been deployed to create predictive models in various medical fields, with some demonstrating improved outcomes compared to their standard-of-care counterparts.... Read more

Strategic Collaboration to Develop and Integrate Generative AI into Healthcare

Top industry experts have underscored the immediate requirement for healthcare systems and hospitals to respond to severe cost and margin pressures. Close to half of U.S. hospitals ended 2022 in the red... Read more

AI-Enabled Operating Rooms Solution Helps Hospitals Maximize Utilization and Unlock Capacity

For healthcare organizations, optimizing operating room (OR) utilization during prime time hours is a complex challenge. Surgeons and clinics face difficulties in finding available slots for booking cases,... Read more

AI Predicts Pancreatic Cancer Three Years before Diagnosis from Patients’ Medical Records

Screening for common cancers like breast, cervix, and prostate cancer relies on relatively simple and highly effective techniques, such as mammograms, Pap smears, and blood tests. These methods have revolutionized... Read morePoint of Care

view channel

Critical Bleeding Management System to Help Hospitals Further Standardize Viscoelastic Testing

Surgical procedures are often accompanied by significant blood loss and the subsequent high likelihood of the need for allogeneic blood transfusions. These transfusions, while critical, are linked to various... Read more

Point of Care HIV Test Enables Early Infection Diagnosis for Infants

Early diagnosis and initiation of treatment are crucial for the survival of infants infected with HIV (human immunodeficiency virus). Without treatment, approximately 50% of infants who acquire HIV during... Read more

Whole Blood Rapid Test Aids Assessment of Concussion at Patient's Bedside

In the United States annually, approximately five million individuals seek emergency department care for traumatic brain injuries (TBIs), yet over half of those suspecting a concussion may never get it checked.... Read more

New Generation Glucose Hospital Meter System Ensures Accurate, Interference-Free and Safe Use

A new generation glucose hospital meter system now comes with several features that make hospital glucose testing easier and more secure while continuing to offer accuracy, freedom from interference, and... Read moreBusiness

view channel

Johnson & Johnson Acquires Cardiovascular Medical Device Company Shockwave Medical

Johnson & Johnson (New Brunswick, N.J., USA) and Shockwave Medical (Santa Clara, CA, USA) have entered into a definitive agreement under which Johnson & Johnson will acquire all of Shockwave’s... Read more